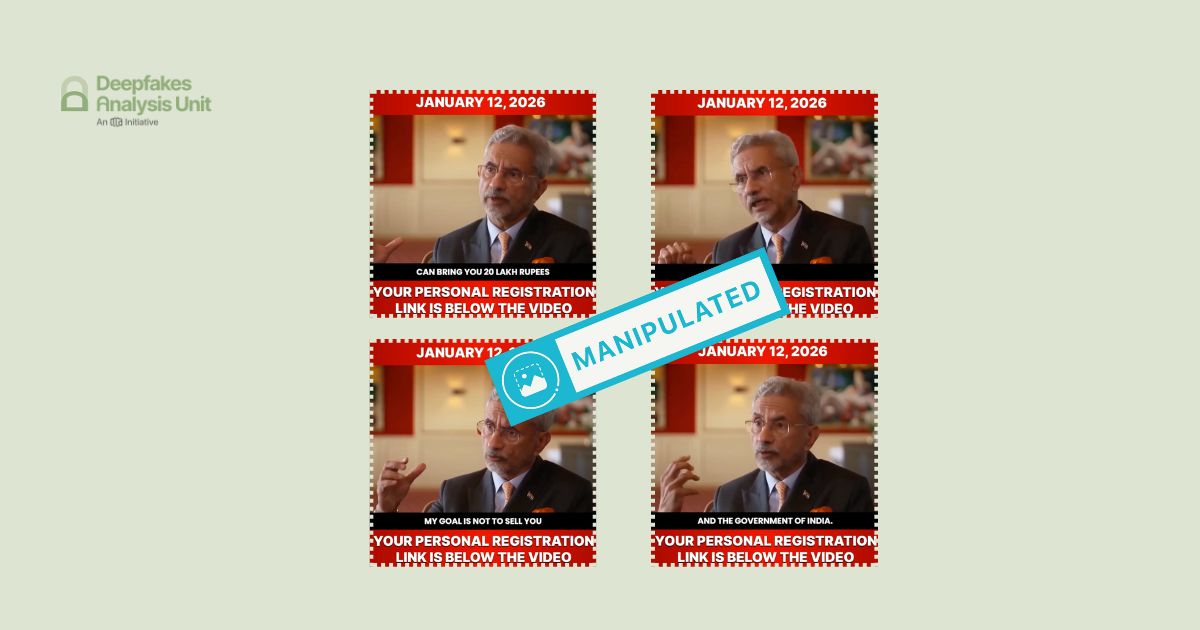

The Deepfakes Analysis Unit (DAU) analysed a video that apparently shows Gen. Upendra Dwivedi, India’s Chief of Army Staff, supposedly declaring Israel as India’s representative in the “Gaza Peace Board” while opposing Pakistan’s inclusion in the same. After putting the video through A.I. detection tools and getting our expert partners to weigh in, we were able to conclude that the video was manipulated with synthetic audio.

The 42-second video in English was discovered by the DAU during social media monitoring. It was embedded in a post on X, formerly Twitter, by an account withheld in India in response to a legal demand; we will refrain from sharing further details about the account. However, we were able to access an archived version of the video on a partner fact-checker’s website. We do not have any evidence to suggest whether the suspicious video originated from an account on X or elsewhere.

The fact-checking unit of the Press Information Bureau (PIB), which debunks misinformation related to the Indian government, posted a fact-check for the video from their verified handle on X.

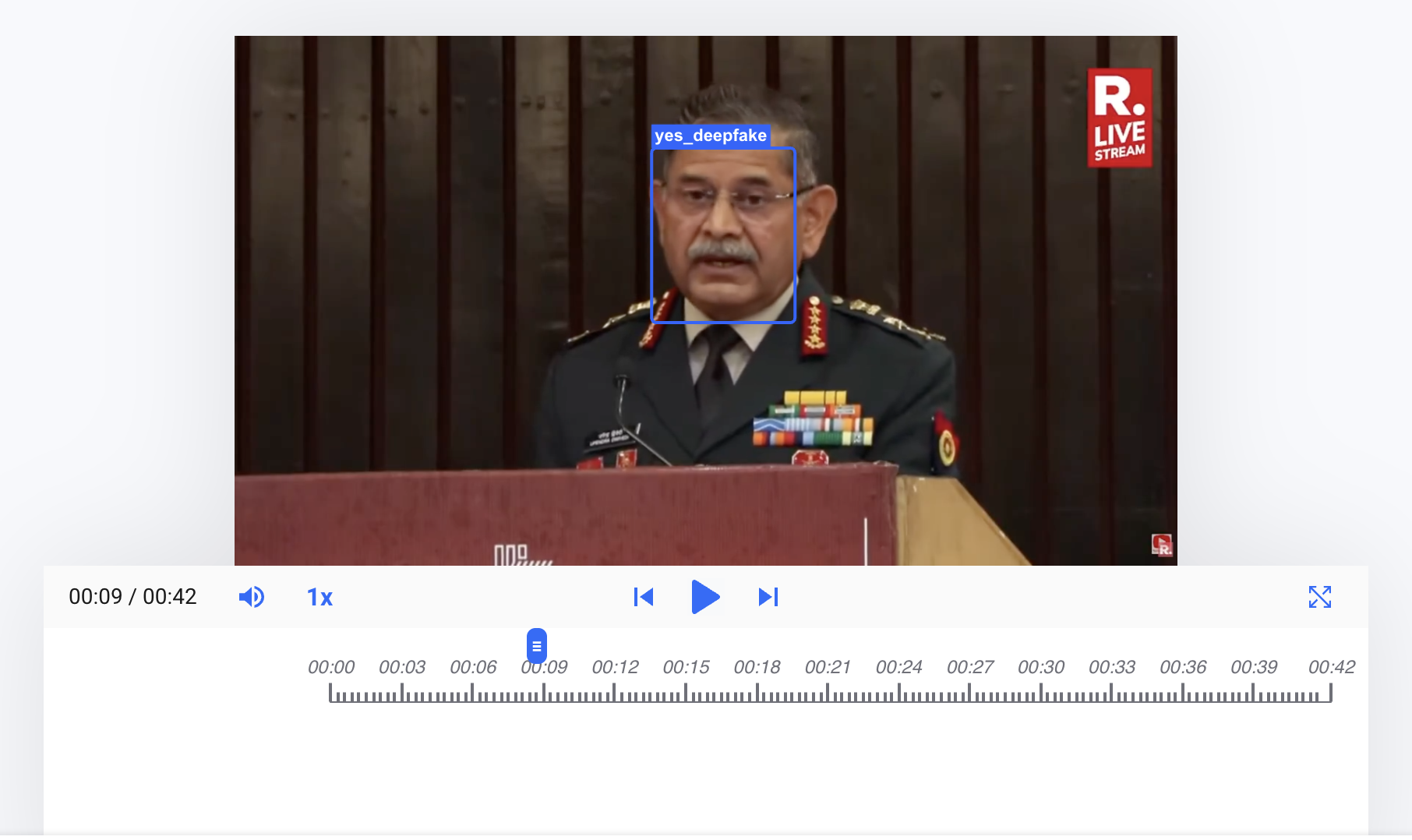

The video opens with General Dwivedi in a medium close-up. His backdrop is wood-panelled and he appears to be standing behind a lectern with a microphone, giving the impression that he is addressing an audience. His head and gaze shift in different directions, including downwards, as if reading from something.

A logo resembling that of Republic Media Network, an Indian news network, is visible in the top right corner of the video frame. The word “livestream” can be seen as part of the logo, which is a combination of bold text in white set against a red background. In the bottom right corner, another logo also similar to the network’s logo seems to overlap with a logo resembling that of YouTube.

A male voice recorded over the general’s video track claims that “India does not need to be a part of Gaza Peace Board” as it is “being represented by Israel there”. It further states that India opposes Pakistan’s inclusion as “Pakistan will take the side of Gaza and Hamas” and that “will go against Israel”.

The same voice further adds that Pakistan's “role” in the “Gaza Peace Board needs to be exposed”. It warns that “Pakistan might use this platform to legitimise Kashmir cause in the future”, which “will create troubles on our borders”. The video ends with the voice declaring that “Pakistan is a terrorist country and needs to be shown its place”.

The overall video quality is good. The lip movements of the purported speaker synchronise well with the audio track. His lower teeth appear blurred in some frames, disappear in a few others, and lack a consistent shape throughout the video; his upper teeth are not visible. The rim of his glasses seems to meld into his skin in a few instances in the video.

We compared the voice attributed to the general in the video with his recorded speeches available online. The diction and accent sound similar but the intonation and tempo of the speech vary between the voices. His characteristic delivery is faster with a certain cadence as opposed to the voice heard in the audio track, which sounds mellow and scripted. An echo can be heard throughout the audio track.

We undertook a reverse image search using screenshots from the video and traced the general’s clip to a video published on Jan. 22, 2026, on the official YouTube channel of Republic World, which is part of the Republic Media Network.

The general’s clothing, backdrop, and body language are the same in the video we reviewed and the one we traced. The audio in both videos is in English but the content is different.

Logos of Republic Media Network and YouTube are visible in the source video as well. However, unlike in the doctored video, the logos do not overlap, and the Republic logo in the bottom right corner is slightly bigger in the source video.

Unlike in the doctored video, the apparent melding of the rim of the general’s glasses into his skin and the echo in the audio track are not noticeable anywhere in the source video. However, some ambient sound can be heard in the source video, which is absent in the doctored video.

It appears that a clip from the source video has been lifted and used to create the doctored video as it has no visible jump cuts or transitions and looks fairly seamless.

To discern the extent of A.I. manipulation in the video we reviewed, we put it through A.I. detection tools.

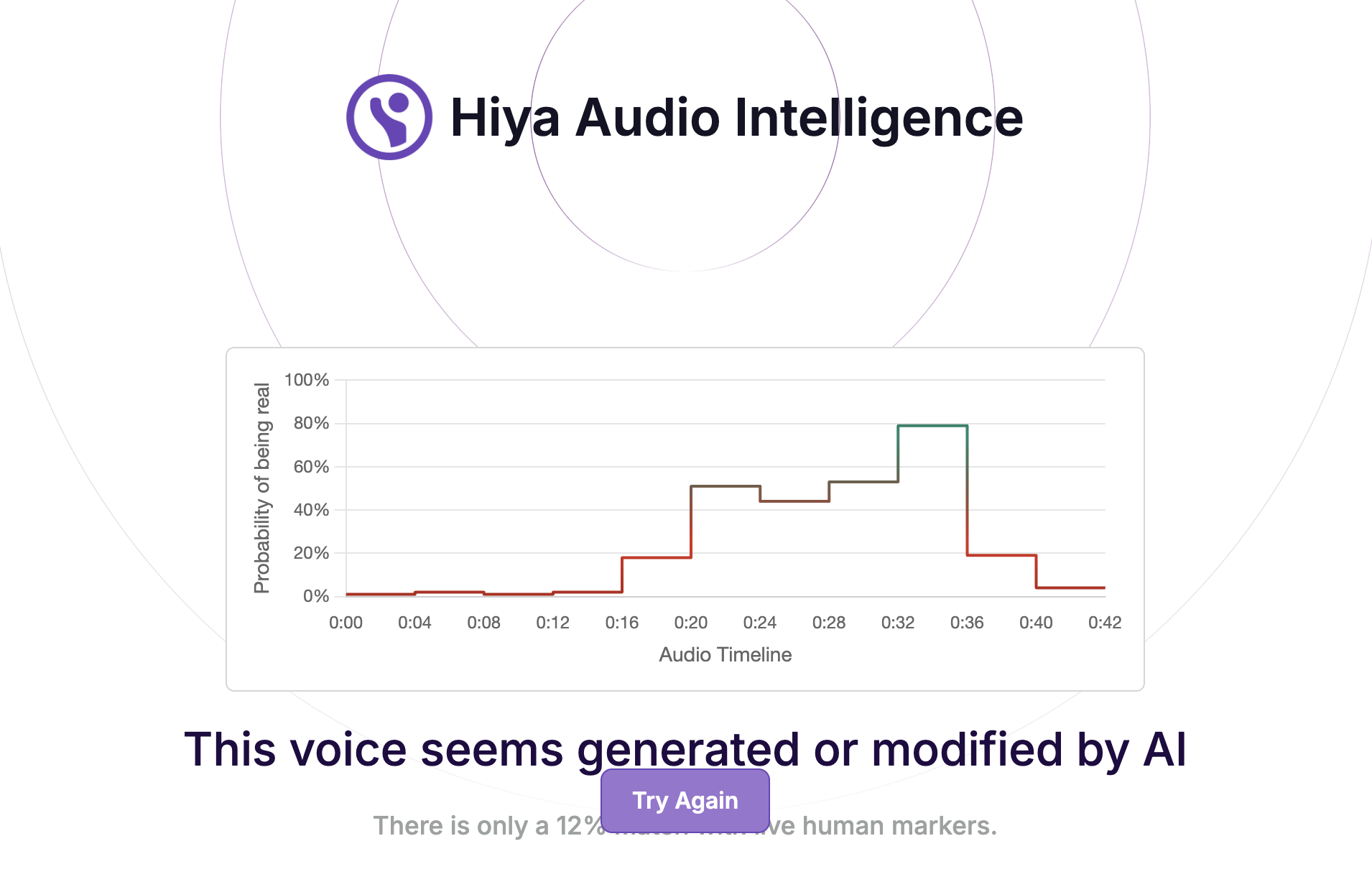

The voice tool of Hiya, a company that specialises in artificial intelligence solutions for voice safety, indicated that there is an 88 percent probability that the audio track in the video was generated or modified using A.I.

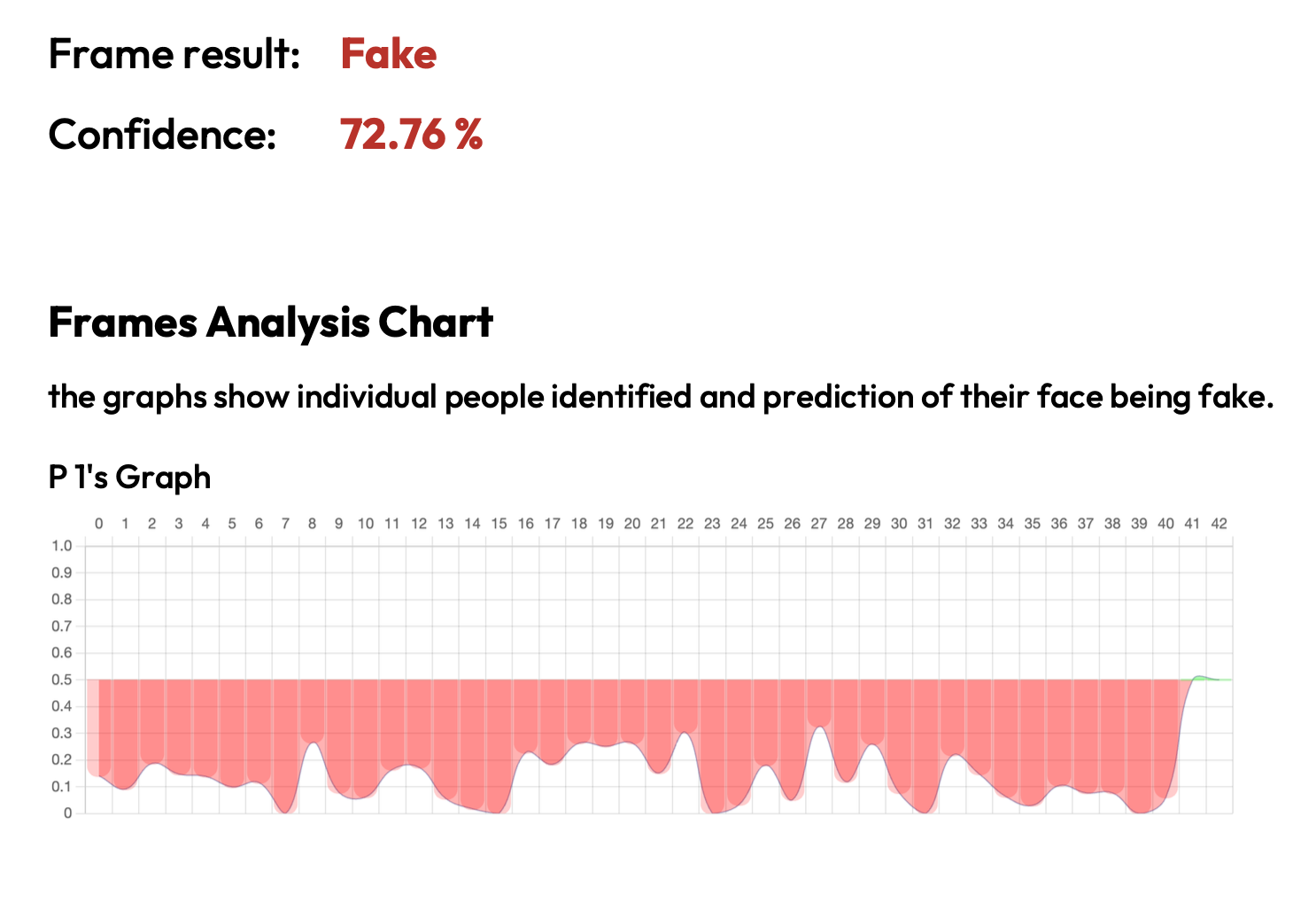

Hive AI’s deepfake video detection tool highlighted several markers of A.I. manipulation in the video track. However, their audio detection tool indicated that only a 10-second segment is A.I.-generated in the entire audio track.

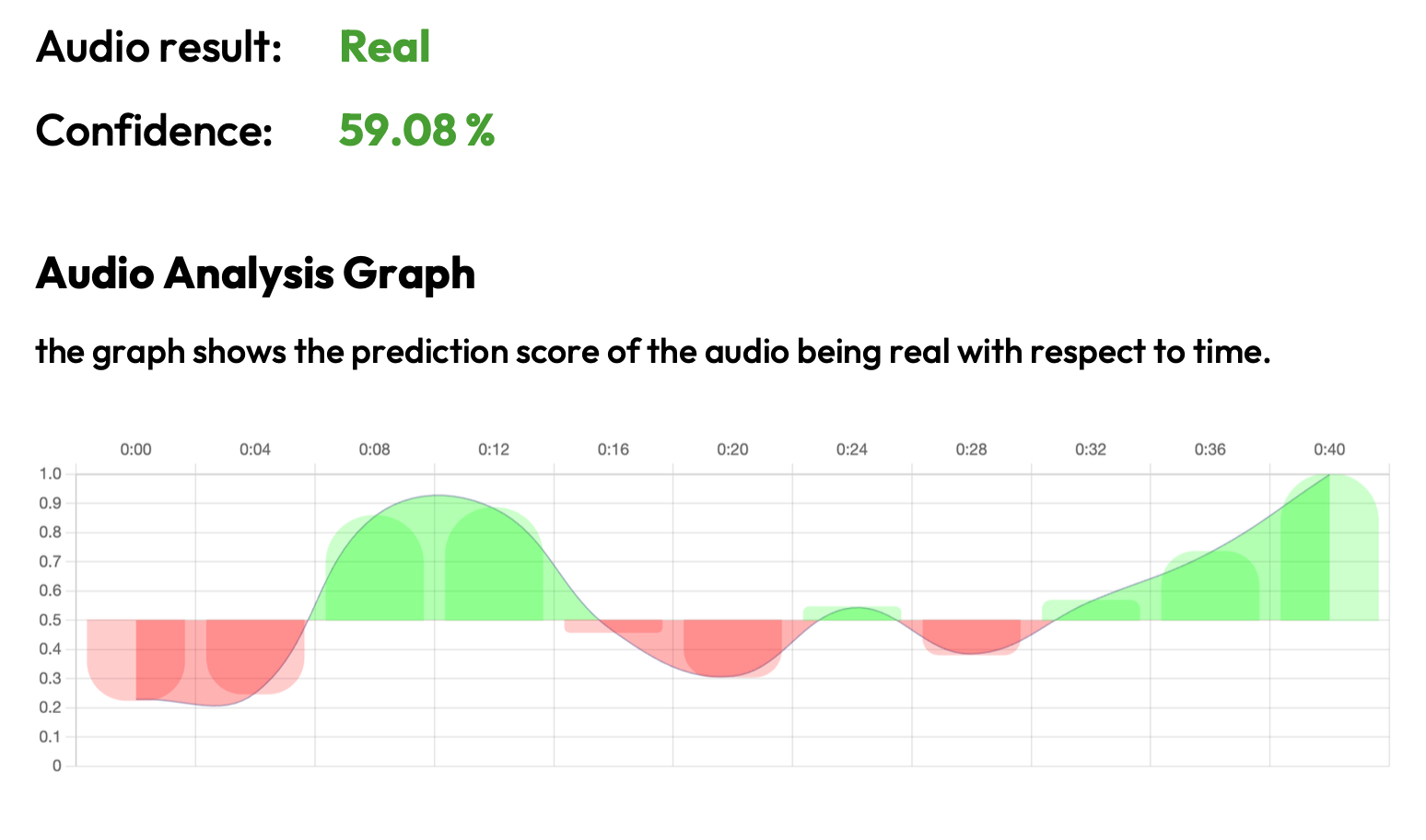

We ran the audio track through the advanced audio deepfake detection engine of Aurigin.ai, a Swiss deeptech company. The results indicated 76 percent confidence in the audio track being partially A.I.-generated.

We also put the audio track through the A.I. speech classifier of ElevenLabs, a company specialising in voice A.I. research and deployment. The results indicated that it is “very unlikely” that the audio track used in the video was generated using their platform. However, a further analysis by the team established that the audio track is synthetic or A.I.-generated.

To get an analysis on the video we reached out to Contrails AI, a Bangalore-based startup with its own A.I. tools for detection of audio and video spoofs.

The team ran the video through audio and video detection models. The results indicated manipulation in the video track but assigned low confidence to manipulation in the audio track.

They stated that a lip-sync or lip-reanimation-based A.I. technique has been used to synchronise the subject’s mouth area with the audio track. They added that some artefacts are visible near the subject’s moustache region.

They noted that their audio spoof detection model may have given a false prediction. They explained that through qualitative analysis they were able to establish that the audio sounds highly monotonous; this indicated to them that it could be a studio recording or voice cloning using A.I.

To get further expert analysis on the video, we escalated it to the Global Online Deepfake Detection System (GODDS), a detection system set up by Northwestern University’s Security & AI Lab (NSAIL). The video was analysed by two human analysts and run through 22 deepfake detection algorithms for video analysis and 70 deepfake detection algorithms for audio analysis.

Of the 22 predictive models, two gave a higher probability of the video being fake and the remaining 20 gave a lower probability of the video being fake. Of the 70 predictive models, one gave a higher probability of the audio being fake, while the remaining 69 gave a lower probability of the audio being fake.
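For readers who want the tallies above as proportions, the short Python sketch below converts them into percentages. It is only an illustration of the arithmetic. The function name `flagged_share` is our own and is not part of GODDS, and the counts are taken directly from the figures reported above.

```python
def flagged_share(flagged: int, total: int) -> float:
    """Return the percentage of models that gave a higher probability of 'fake'.

    Hypothetical helper for illustration; not part of any detection tool.
    """
    if total <= 0 or not 0 <= flagged <= total:
        raise ValueError("flagged must be between 0 and total, and total must be positive")
    return round(100 * flagged / total, 1)


# Counts as reported above: 2 of 22 video models, 1 of 70 audio models.
video_share = flagged_share(2, 22)
audio_share = flagged_share(1, 70)

print(f"Video models leaning towards fake: {video_share}%")
print(f"Audio models leaning towards fake: {audio_share}%")
```

These shares describe only how many algorithms leaned towards "fake"; the observations of the human analysts are reported separately.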

In their report, the team noted several timecodes where the border of the subject’s glasses frequently blurs into his face. They also pointed out that in various frames it appears that his teeth change shape, and often disappear and reappear within seconds, as we mentioned above.

They also highlighted an instance in the video where, as the subject appears to speak, his chin intersects with his shirt collar and it seems as if the collar is dividing his chin. They added that the subject’s voice seems to lack the natural variations in tone and cadence characteristic of human voices, something we pointed to above as well.

Sharing other timecodes, the team pointed to instances in the video where the subject's mouth movements occasionally appear to pause or stiffen mid-sentence. However, they explained that when this happens, the audio remains unnaturally steady and doesn’t reflect these minor pauses. In conclusion, the team stated that the video is likely manipulated via artificial intelligence.

On the basis of our observations and expert analyses, we can conclude that the video is fabricated. An A.I.-generated audio clip was used with the general’s visuals to peddle a false narrative about India’s supposed representation in the “Gaza Peace Board” and its opposition to Pakistan’s inclusion.

(Written by Debraj Sarkar and Debopriya Bhattacharya, edited by Pamposh Raina.)

Kindly Note: The manipulated audio/video files that we receive on our tipline are not embedded in our assessment reports because we do not intend to contribute to their virality.

You can read the fact-checks related to this piece published by our partners:

AI Clip of COAS Dwivedi Viral as Criticizing Pak’s Entry on Gaza Peace Board

Video of COAS Criticising Pakistan's Entry On US Peace Board Is A Deepfake